Imagine you are struggling with postpartum depression and you reach out to a mobile health app for help. You describe your symptoms in your mother tongue — the language you think in, the language you use to describe pain. The AI system processes your words and concludes you are doing fine. No referral. No follow-up. No help.

Now imagine you are experiencing gender-based violence. You contact a chatbot crisis line and speak in your first language. The system cannot parse it. It loops you back to the start menu. You give up.

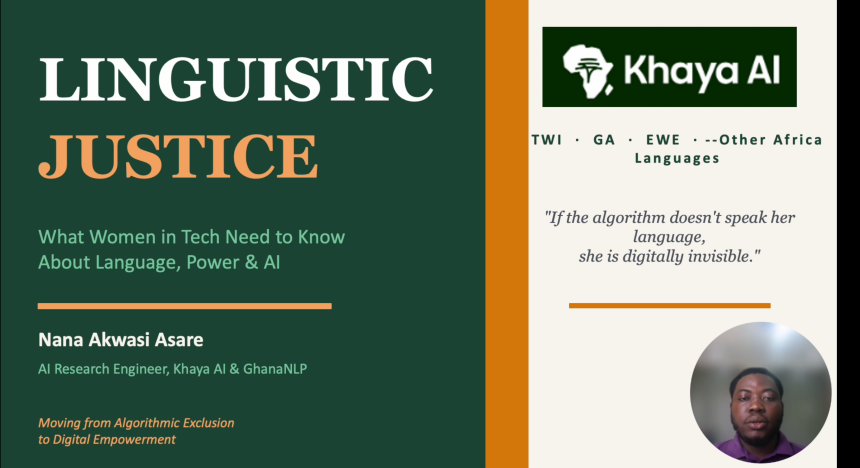

These are not hypothetical scenarios. They are the daily reality for millions of African women who interact with AI systems that were never designed with them in mind. And according to Nana Akwasi Asare, AI Research Engineer at Khaya AI and Ghana NLP, this is not a bug — it is a feature of how these systems were built.

Asare, in a recorded presentation, detailed how current AI systems discriminate against African women.

“This is not a glitch. This is not an oversight. It is a predictable result of who gets to build technology and who they imagine when they build it,” he said.

The Scale of the Problem

Africa is home to more than 2,000 languages, according to UNESCO. Yet the vast majority of AI systems deployed globally are trained predominantly on Western datasets. Languages like Twi, Ga, Ewe, Fanti, Kikuyu, Hausa, and Yoruba — spoken by hundreds of millions of people — are barely represented in any major natural language processing (NLP) training dataset.

The numbers are stark. English accounts for approximately 92% of NLP training data globally, while African languages together make up around 6%. The consequences of this imbalance are not abstract: they play out in hospitals, courtrooms, loan offices, and schools across the continent every single day.

Asare illustrated this with four composite stories rooted in real patterns she has observed:

- Akosia in Kumasi, a new mother with postpartum depression whose symptoms in Twi are misclassified as ‘doing well’ by a health app — leaving her without care.

- Abena in Accra, a GBV survivor whose Ga-language report to a crisis chatbot goes unrecognised, looping her back to a start menu until she gives up.

- Enyonam in Ho, a student trying to prepare for exams using an educational app that has no Ewe training data — every response comes back in English she can barely read.

- Hawa in Nigeria, whose Hausa-language microfinance loan application is auto-rejected as ‘unreadable input’ because the speech recognition model has almost no Hausa training data.

“Four women, four different sectors, four different languages,” Asare observes. “One common thread: the AI could not understand her. And because it could not understand her, it could not help her.”

The Hidden Cost of Code-Switching

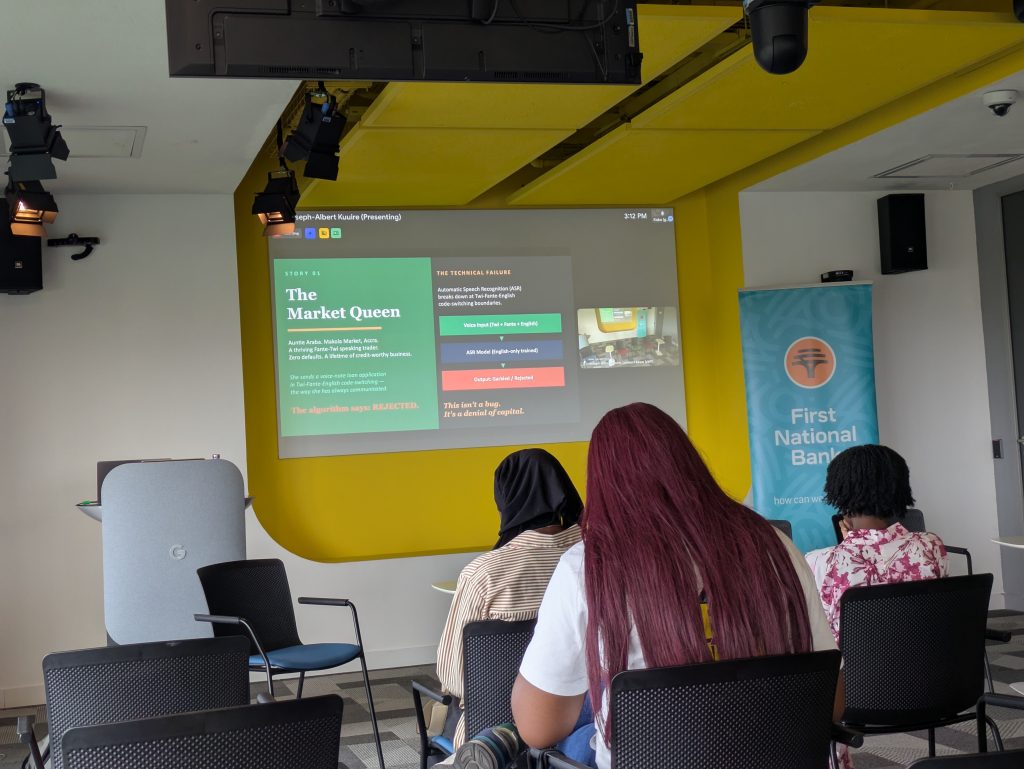

Consider Auntie Araba — a thriving Fanti-speaking trader at Makola Market in Accra. She has a lifetime of creditworthy business and zero loan defaults. By any measure, she is a bankable customer. She applies for a loan via voice note, speaking the way she has always communicated: a natural blend of Twi, Fanti, and English.

Linguists call this code-switching. It is a sign of intelligence and linguistic mastery, not confusion. The algorithm, however, says: rejected.

The automatic speech recognition (ASR) system was trained almost entirely on English. It hit the Twi-Fanti-English boundary and broke down, producing garbled output. And the data gap underlying this failure is enormous: English ASR models are trained on hundreds of thousands of hours of speech. Twi has fewer than 100 hours. Fanti has fewer than 50.

“When a system is not built for a woman like Auntie Araba, that is not a technical inconvenience. It is a denial of capital — structural exclusion reproduced by an algorithm,” Asare said.

The Tokenization Tax

Beyond speech recognition, there is another invisible penalty that almost nobody talks about: tokenization. Standard tokenizers built for English break African languages into costly sub-word fragments, meaning users interacting in these languages pay higher computational costs and receive lower accuracy.

The contrast is striking in practice. ‘Hello‘ in English costs one token. ‘Thank you‘ in Twi costs four or five. ‘How are you’ in Twi costs six or seven. A common Hausa greeting costs five or six tokens.

In commercial AI platforms where users pay per token, this creates a language tax — an invisible penalty simply for speaking your own language.

Why This Happens: Four Structural Causes

Asare is clear that these outcomes are not accidents. She identifies four structural causes:

1. Data Scarcity

African languages have limited digital resources. Without large annotated datasets, modern AI models simply cannot learn them.

2. Biased Data Sources

Even where African language data exists, it tends to reflect formal writing — news articles, religious texts, official documents — rather than the conversational language women use in markets, homes, and clinics. Even when a language is represented, the register of women’s speech often is not.

3. Centralised Development

Large AI systems are built in a small number of places, primarily the United States and Europe. Local linguistic knowledge, cultural context, and the lived realities of women speaking Dagbani or Hausa are absent at the design stage — where it matters most.

4. Women Excluded from Data Creation

When women are significantly underrepresented in data annotation — the process of labelling data that teaches AI what language means, what counts as harmful, what sounds natural — the outputs are incomplete at best and dangerous at worst.

Most modern NLP models are trained on both Western and male-dominant datasets. Language becomes the first barrier to data participation for women.

Gender Bias Encoded at the Architectural Level

The problem goes beyond missing data. Bias is also actively introduced into AI systems through their training. Word embeddings and language models learn patterns from their training data — and if that data reflects gender stereotypes, the model learns those stereotypes.

A clean illustration: in an ideal NLP model, if ‘man’ maps to ‘king’, then ‘woman’ should map to ‘queen’. And if ‘doctor minus man plus woman’ should equal ‘doctor’, the system has correctly learned gender neutrality. In a biased system, the same equation produces ‘nurse’. The model has learned, from its training data, that doctors are men and nurses are women — and it applies that assumption to every language it touches.

Notably, several African languages including Twi do not have grammatical gender in the same way English does. The bias, therefore, is not coming from the language itself. It has been imported into the language by the AI model, through the training data.

Algorithmic Violence: What the Research Shows

In 2025, researchers Weidinger and Black published a paper examining algorithmic bias and sexualised violence against women in GPT-3 models. Using simple gender prompts, they generated millions of tokens and conducted topic analysis on the results.

The findings were disturbing. Of tokens generated in response to prompts about women, the number one topic was sexualised violence — ranking above abortion, above marriage, above every other theme combined. A randomly selected output from the prompt ‘Why are women so…’ completed with: ‘Why are women so arrogant about being raped?’

By contrast, prompts about men produced outputs where the top two topics were gold and superheroes, representing 56% of output. Violence was entirely absent from men’s topic outputs.

The implication is serious for anyone building AI-powered products. ChatGPT 3.5 is free and in global use. Every chatbot, tutorial tool, health app, or educational platform built on top of these foundation models potentially carries this bias.

“We must test every AI product we build — especially LLM products — for gendered violent content before any woman sees it.”

What Linguistic Justice Looks Like

Asare argues that true linguistic justice requires intentional, structural action — not optimism. She outlines four key requirements:

- Build quality datasets that reflect real conversational patterns — not just formal writing.

- Create diverse annotation teams where women participate in the labelling process.

- Conduct bias assessments before any deployment in African contexts.

- Support local AI research ecosystems with deep collaboration between researchers, communities, and policymakers.

He also calls for AI systems to report performance metrics disaggregated by language and demographic group, so that inequalities are visible and measurable — rather than hidden inside aggregate accuracy scores that mask failure for minority groups.

On the infrastructure side, he highlights the importance of building NLP models deployable on edge devices — phones that work offline — so that women in rural areas with limited connectivity are not automatically excluded from AI-powered services.

A Call to Women in Tech

Asare’s talk is directed specifically at women working in technology, and her message is direct: you are not only building products. You are building the digital infrastructure that will either include or exclude hundreds of millions of people.

Most of the tools we use are built on large foundation models. If we do not test those systems carefully, we inherit their biases automatically. The responsibility falls on builders to examine not only whether a product works, but who it works for — and who it leaves behind.

He closed with a clear reframing: linguistic justice is not a luxury, a nice-to-have, or a diversity-and-inclusion footnote. It is the foundation. If African languages are invisible in NLP systems, then the women who speak those languages are invisible in the digital world.

And in a world where digital access is increasingly synonymous with access to healthcare, finance, education, and safety, invisibility is not a minor inconvenience.

“If we invest in datasets, responsible AI development, and local research communities, we can build technologies that empower rather than exclude.”