By the time most people interact with an AI system — applying for a job, seeking a loan, passing through a border — a set of assumptions has already been made about them. For women, and especially women in the Global South, those assumptions are disproportionately wrong, harmful, and invisible.

Dr. Aurelia Ayisi of the University of Ghana came to the Tech Labari Women and AI event at the Google AI Community Center in Accra with evidence, a framework, and an argument: that the justice gap in AI is not an accident of technology — it is a consequence of choices, and choices can be changed.

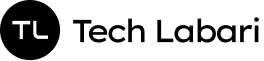

AI, Ayisi began, is no longer an emerging technology to be watched from a distance. It is already embedded in the systems that govern everyday life — filtering job applications, assessing creditworthiness, moderating online speech, and verifying identities at banks and borders.

“Algorithms influence opportunities, safety, visibility, and power,” she said. The question is not whether AI affects women. The question is whether the people designing and regulating it acknowledge how — and whether they intend to do anything about it.

The Scale of the Problem

Dr. Ayisi grounded her argument in data.

Algorithmic harm, she explained, takes several forms: bias built into training data and system design, automated discrimination in high-stakes decisions, AI-amplified harassment and abuse online, and the structural invisibility that results when women, particularly women of colour in Africa, are underrepresented in or absent from the datasets that shape how systems perceive the world.

- 96% of deepfake videos online are pornographic – Sensity AI, 2019

- 900%+ rise in deepfake incidents globally between 2019 and 2023 – Sumsub Identity Fraud Report, 2023

- 1 in 3 women globally have experienced some form of online violence – UN Women, 2021

The picture on gendered digital harassment was particularly stark.

AI chatbots are being weaponised to generate targeted abusive content. Recommender systems amplify gendered hate at algorithmic speed. Automated trolling and doxxing have lowered the cost of coordinated intimidation to nearly zero.

And crucially, the violence does not stay online: as Ayisi noted, it spills directly into women’s offline lives, silencing women and deterring their participation in public and professional spaces.

Systemic exclusion compounds the harassment problem. Facial recognition technologies misidentify darker-skinned women at significantly higher rates than other groups — yet these same systems are routinely deployed in hospitals, financial institutions, and border control.

Hiring algorithms trained on historical employment data replicate the discrimination that kept women out of certain sectors in the first place. Voice recognition systems, trained predominantly on male speech patterns, perform worse for women. Health algorithms trained on data that underrepresents women inform medical decisions calibrated to bodies that are not theirs.

“AI is often framed as an inevitable technological force, but in reality it is a human-designed system shaped by policy choices, data, and institutional priorities.”

Dr. Aurelia Ayisi, University of Ghana

The Governance Gap — Closer to Home

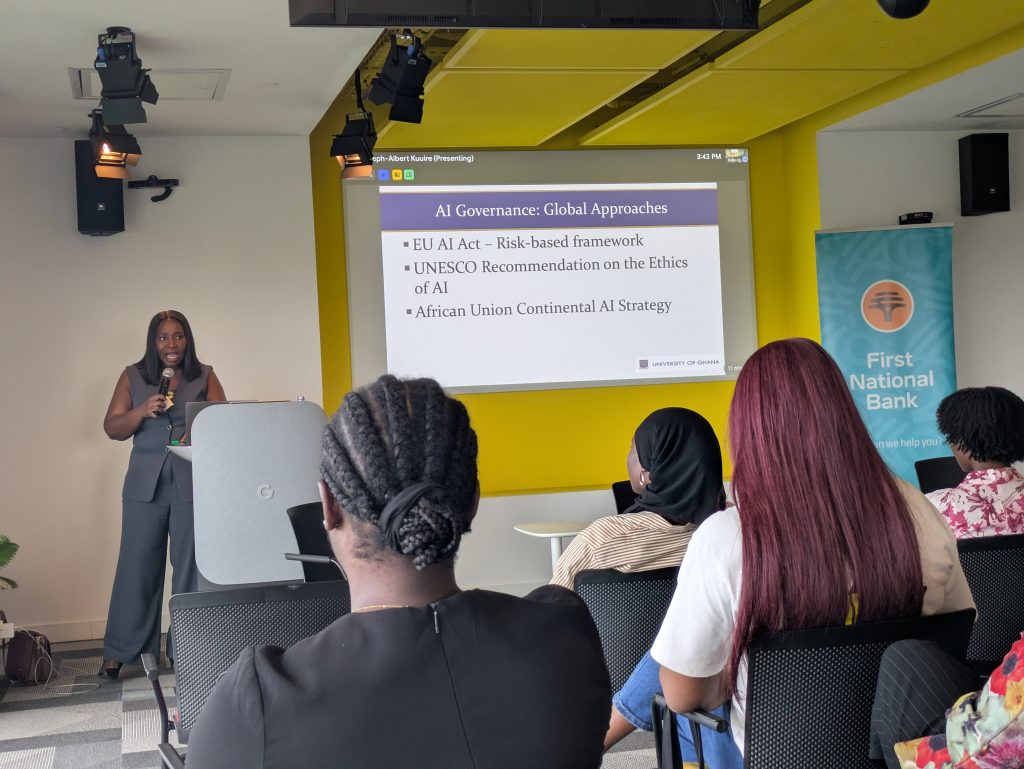

Internationally, some frameworks have begun to emerge. The EU AI Act introduces a risk-based regulatory structure, UNESCO has adopted a Recommendation on the Ethics of Artificial Intelligence, and the African Union has a Continental AI Strategy.

But Dr. Ayisi was precise about the distance between these frameworks and the lived reality of women in Ghana and across Africa.

The Gender Gaps in AI Governance: Ghana & Africa

- No gender-specific AI regulation exists

- Platform accountability mechanisms remain weak

- AI literacy among women is limited

- Women are underrepresented in AI design and development teams

- Adequate redress systems for digital harm are absent

- Data colonialism leaves African women underrepresented in global training datasets

That final point — data colonialism — deserves particular attention. When the datasets that train AI systems are built overwhelmingly from data generated in the Global North, the result is a technology that encodes Western norms as defaults, treats African contexts as edge cases, and consistently produces worse outcomes for African users.

Women in this context face a compounded disadvantage: marginalised by gender and marginalised by geography simultaneously.

Four Strategic Directions

Rather than stopping at diagnosis, Dr. Ayisi proposed a framework of four strategic directions for closing the justice gap. Taken together, they constitute a comprehensive approach that addresses AI harm at every stage: while systems are designed, before they are deployed, after harm occurs, and in how citizens relate to them.

1. Red Tape — Regulatory Safeguards Before Deployment

Dr. Ayisi reclaimed the phrase “red tape” — typically used pejoratively — and reframed it as essential protection. She proposed mandatory pre-deployment requirements including safety testing, ethical compliance checks, transparency reporting, data protection rules, and accountability mechanisms.

The analogy was direct: construction workers are required to wear protective gear not because it speeds up construction but because the alternative is unacceptable harm. In the same way, AI systems need safety standards not to slow innovation, but to give it a conscience.

2. Gender-Responsive AI — Designing Inclusion In

The second direction focuses on the design and development process itself. Mandatory gender impact assessments would require developers to actively evaluate how their systems affect women before, not after, deployment.

Diverse datasets, inclusive design teams, transparent reporting mechanisms, and survivor-centred redress systems would ensure that the people most likely to be harmed have a structural role in preventing that harm — not as an afterthought, but as a design requirement.

3. Law & Enforcement — Making Harm Accountable

Good design principles without legal teeth remain voluntary. Ayisi’s third direction calls for four measures: legal liability for platforms that negligently fail to moderate AI-enabled abuse; explicit criminalisation of malicious deepfakes, particularly non-consensual intimate imagery; algorithmic transparency laws that make systems legible to regulators; and independent oversight bodies with the authority and resources to act.

4. MIL & AI Literacy — Education as Prevention

The fourth direction addresses the citizen. Integrating AI literacy into Media and Information Literacy (MIL) education would equip people to identify AI-generated content and deepfakes, protect their digital identity and personal data, recognise algorithmic bias and manipulation, report abuse through platform mechanisms, and participate in digital public life with confidence rather than fear.

Dr. Ayisi framed this not as a supplementary measure but as a first line of defence — one that regulatory frameworks alone cannot provide.

A Systems Argument, Not a Grievance

What distinguished Ayisi’s address was its framing. This was not a presentation about victimhood or about technological pessimism. It was a systems argument: that AI’s outcomes follow from its design choices and governance structures, and that different choices would yield different outcomes.

The technology is not inevitable. The harm is not inevitable. What is required is the institutional will to treat gender as a foundational design consideration rather than a late-stage adjustment.

The four strategic directions she proposed are interdependent. Regulation without literacy leaves citizens unable to use the protections they are formally entitled to.

Literacy without enforcement creates awareness of injustice with no pathway to remedy. Gender-responsive design without legal accountability remains aspirational. And legal frameworks without pre-deployment safeguards allow harm to spread faster than accountability can catch up with it.

“If we want AI to advance justice rather than deepen inequality, gender considerations cannot remain an afterthought. They must be embedded at every stage — from design and development to regulation and governance.”