Before an AI model can learn to speak Yoruba or Wolof, someone has to work out that the text in front of it is Yoruba or Wolof.

For years, the foundational plumbing of AI language infrastructure has quietly failed African languages at the very first step — identification. The tools that power language detection were built for European and Asian texts. Feed them a sentence in Swahili or Hausa, and there’s a reasonable chance they’ll tell you it’s French. Or English.

That’s the problem Pleias and the GSMA are now taking a swing at.

The Invisible Bottleneck

Pleias and the GSMA have jointly released CommonLingua — an open-source language identification (LID) model specifically engineered to work with African language data at scale.

It’s the first release under the GSMA’s AI Language Models in Africa, by Africa, for Africa initiative, a coalition whose stated mission is closing the African language gap in AI development.

The gap is significant. Africa has more than 2,000 living languages, yet most AI training pipelines begin with a tool that can’t reliably tell those languages apart. Leading identification systems — fastText, GlotLID, OpenLID — were designed around high-resource European and Asian languages.

Applied to African content, they routinely mislabel text. The gap extends beyond dedicated LID tools: even the most advanced frontier models lose roughly 30 percentage points of accuracy on African languages compared with major world languages.

Language identification is not glamorous work. But it is foundational. Before a Swahili language model can be built, someone has to correctly tag all the Swahili text.

That step — unglamorous, invisible, essential — is exactly where the African AI pipeline has been breaking down.

Small Model, Outsize Performance

What makes CommonLingua notable isn’t just what it does — it’s how efficiently it does it.

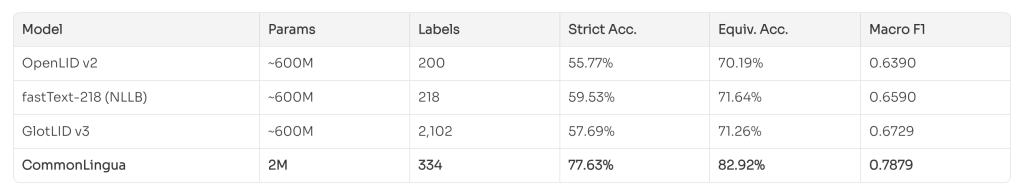

The model has just 2 million parameters, ships as an 8MB file, and still outperforms systems up to 300 times its size. On the newly released CommonLID benchmark, it achieves 83% accuracy and a macro F1 score of 0.79, more than 10 percentage points ahead of competing models under comparable testing conditions.
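The two headline numbers measure different things. Accuracy counts correct predictions across all texts, so frequent languages dominate it; macro F1 computes an F1 score per language and averages them with equal weight, so a model that quietly mislabels a rare language is penalised. A minimal sketch with made-up labels (not CommonLID data; the benchmark's exact averaging protocol is not described in the release and is assumed here):

```python
from collections import Counter


def accuracy(gold: list[str], pred: list[str]) -> float:
    """Share of texts whose predicted language matches the gold label."""
    return sum(g == p for g, p in zip(gold, pred)) / len(gold)


def macro_f1(gold: list[str], pred: list[str]) -> float:
    """Unweighted mean of per-language F1 scores.

    The label set is the union of gold and predicted labels, similar to
    scikit-learn's default for average="macro"; when a denominator is zero,
    that precision or recall is set to 0.
    """
    tp, fp, fn = Counter(), Counter(), Counter()
    for g, p in zip(gold, pred):
        if g == p:
            tp[g] += 1
        else:
            fp[p] += 1  # wrongly claimed language p
            fn[g] += 1  # missed the true language g
    scores = []
    for lang in set(gold) | set(pred):
        predicted = tp[lang] + fp[lang]
        actual = tp[lang] + fn[lang]
        precision = tp[lang] / predicted if predicted else 0.0
        recall = tp[lang] / actual if actual else 0.0
        total = precision + recall
        scores.append(2 * precision * recall / total if total else 0.0)
    return sum(scores) / len(scores)


# Toy labels (ISO 639-3 codes): eight Swahili texts identified correctly,
# two Hausa texts mislabelled as French.
gold = ["swh"] * 8 + ["hau"] * 2
pred = ["swh"] * 8 + ["fra"] * 2

print(f"accuracy = {accuracy(gold, pred):.2f}")  # 0.80
print(f"macro F1 = {macro_f1(gold, pred):.2f}")  # 0.33
```

Accuracy looks respectable at 0.80, but macro F1 falls to 0.33 because Hausa (and the spurious French label) each count as much as Swahili.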

In practice, CommonLingua can process around 20 texts per second on a standard CPU, and up to 3,000 texts per second on a single GPU — numbers that make it viable for large-scale data pipeline work without demanding serious compute infrastructure.

For developers working in resource-constrained environments across the continent, that matters enormously.
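To put the throughput figures in scale, here is a back-of-the-envelope sketch. The corpus size is a hypothetical round number, and the calculation assumes the quoted rates hold constant for the whole run, ignoring batching, I/O and parallelism.

```python
def hours_to_label(num_texts: int, texts_per_second: float) -> float:
    """Wall-clock hours to label num_texts at a constant throughput."""
    return num_texts / texts_per_second / 3600


# Hypothetical corpus size chosen for illustration; not from the release.
CORPUS_SIZE = 10_000_000

# Throughput figures quoted for CommonLingua (texts per second).
RATES = {"standard CPU": 20, "single GPU": 3_000}

for device, rate in RATES.items():
    print(f"{device}: {hours_to_label(CORPUS_SIZE, rate):,.1f} hours")
```

Under these assumptions, ten million texts take roughly 139 hours (almost six days) on one CPU versus under an hour on one GPU, a 150-fold difference that follows directly from 3,000 / 20.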

The model covers 334 languages in total, including 61 African languages drawn from eight language groupings, among them Bantu, Niger-Congo and West African, Afro-Asiatic and Semitic, Cushitic and Chadic, Berber, Nilo-Saharan, and pidgins and creoles.

It handles multiple scripts — Latin, Arabic, Ethiopic, N’Ko, Tifinagh — by operating directly on raw UTF-8 byte sequences rather than relying on language-specific tokenisation.

That architectural choice allows it to handle code-mixed and closely related languages more gracefully than its predecessors.
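The release does not detail CommonLingua's internals beyond its working on raw bytes. As a hedged illustration of what that means, the sketch below encodes a short sample from each script named above as UTF-8 and slices it into overlapping byte trigrams. The samples are chosen for illustration, and the trigram features are an assumption drawn from common LID practice, not a description of CommonLingua's architecture.

```python
# Short samples in each script named in the release, written as escape
# sequences so the exact code points are unambiguous. Illustrative only.
SAMPLES = {
    "Latin (Swahili 'Habari')": "Habari",
    "Arabic ('marhaba')": "\u0645\u0631\u062d\u0628\u0627",
    "Ethiopic (Amharic 'selam')": "\u1230\u120b\u121d",
    "N'Ko (sample letters)": "\u07d2\u07de\u07cf",
    "Tifinagh ('Tamazight')": "\u2d5c\u2d30\u2d4e\u2d30\u2d63\u2d49\u2d56\u2d5c",
}


def byte_ngrams(text: str, n: int = 3) -> list[bytes]:
    """Overlapping byte n-grams over the UTF-8 encoding of `text`."""
    data = text.encode("utf-8")
    return [data[i : i + n] for i in range(len(data) - n + 1)]


for script, text in SAMPLES.items():
    data = text.encode("utf-8")
    # Whatever the script, the model-side alphabet is just the integers 0-255.
    print(
        f"{script}: {len(text)} chars -> {len(data)} bytes, "
        f"{len(byte_ngrams(text))} byte trigrams, first bytes {list(data[:3])}"
    )

# A code-mixed string (Swahili then Amharic) is simply one longer byte stream.
mixed = SAMPLES["Latin (Swahili 'Habari')"] + " " + SAMPLES["Ethiopic (Amharic 'selam')"]
print(f"mixed: {len(mixed.encode('utf-8'))} bytes")
```

The Arabic and N'Ko samples take two bytes per character and the Ethiopic and Tifinagh samples three, yet all of them, and the code-mixed string, reduce to the same 256-symbol alphabet. No script-specific tokeniser has to decide where words begin, which is one plausible reason a byte-level design copes better with mixed input.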

Built on Open Data

The team behind CommonLingua has also been deliberate about its training foundations. The model is trained exclusively on openly licensed and public-domain content drawn from the Common Corpus project, with sources including Wikipedia, OpenAlex scientific publications, VOA Africa, and WaxalNLP. All datasets carry permissive licenses.

That openness is intentional. In a space where proprietary data pipelines have historically locked out African language researchers, releasing both the model and its data lineage publicly is a statement as much as a technical decision.

Pierre-Carl Langlais, Co-founder and CTO of Pleias, framed it plainly: African languages are not an edge case — they are the working languages of hundreds of millions of people, and the AI infrastructure around them should reflect that.

CommonLingua, he noted, is deliberately the first brick: you cannot curate what you cannot identify.

The Bigger Picture

CommonLingua’s release arrives at a moment when African AI development is gaining serious institutional momentum — but still fighting for the basic infrastructure that richer language ecosystems take for granted.

Louis Powell, Director of AI Initiatives at the GSMA, pointed to language identification as something so foundational it’s often overlooked — yet its absence has held back progress on African-language datasets and models for years.

The GSMA’s initiative is designed to move the ecosystem past fragmented, one-off projects toward shared infrastructure that can scale.

The next milestone comes at MWC26 Kigali, where the GSMA and partners plan to convene industry leaders to push the African-language AI agenda further.